Wouldn’t it be great if your Kubernetes services were directly routable, even from outside the cluster? For our Calico v3.4 release, we are excited to announce a new feature that supports advertising routes to service IP addresses in addition to pods. In this blog post, we will discuss why this feature is useful, explain how it works, and walk through an example of how to use it.

Why this is useful

In Kubernetes, access to services is handled by the kube-proxy component. The kube-proxy redirects any requests for a service to an appropriate endpoint (i.e. pod) backing that service. Service cluster IPs are typically only accessible from within the cluster, and external access to services requires a dedicated load balancer or ingress controller. However, Calico’s use of the Border Gateway Protocol (BGP) allows us to route external traffic directly to Kubernetes services by advertising Kubernetes service IPs into the BGP network, removing the need for a dedicated ingress mechanism in certain cases. This feature supports equal cost multi-path (ECMP) load balancing across nodes in the cluster, as well as source IP address preservation for local services. Now let’s jump into the details!

How it works

To enable this feature, set the following environment variable in the calico-node daemonset to the CIDR block reserved for services in your cluster (e.g., 10.96.0.0/12):

CALICO_ADVERTISE_CLUSTER_IPS=<Service CIDR>

Once set, all the nodes within the cluster will statically advertise a route to the range specified. Traffic to IPs within the CIDR will then be load balanced across nodes in the cluster using ECMP routing, at which point it will be forwarded to an appropriate pod backing the service.
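To make the ECMP step more concrete, here is a minimal Python sketch of how an upstream router might spread flows across the advertising nodes. It assumes a per-flow hash over the 5-tuple, which is a common approach but not necessarily what your router does. The function name `pick_next_hop` and the use of SHA-256 are purely illustrative, and the next-hop addresses are borrowed from the example cluster later in this post.

```python
import hashlib

# Node addresses borrowed from the example routing table later in this post.
NEXT_HOPS = ["10.192.0.2", "10.192.0.3", "10.192.0.4"]


def pick_next_hop(src_ip, src_port, dst_ip, dst_port, proto="tcp", next_hops=NEXT_HOPS):
    """Map a flow's 5-tuple to one next hop.

    Illustrative only: real routers use their own hash functions, but the
    idea is the same. Packets of one flow always take the same path, while
    different flows are spread across all equal-cost next hops.
    """
    key = f"{proto}|{src_ip}|{src_port}|{dst_ip}|{dst_port}".encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:4], "big") % len(next_hops)
    return next_hops[index]


if __name__ == "__main__":
    # Four connections from the same client that differ only in source port.
    for port in (40000, 40001, 40002, 40003):
        print(port, "->", pick_next_hop("10.192.0.5", port, "10.96.0.1", 80))
```

Because the choice depends only on the flow's addresses and ports, a given connection sticks to one node, while many connections together are balanced across the cluster.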

Calico respects the externalTrafficPolicy in the Kubernetes service spec. Services with a policy of “Cluster” are covered by the route above, which ensures traffic is load balanced across all nodes in the cluster. Services with a policy of “Local” are advertised as a /32 route, and only from the nodes running a pod backing that service. As a result, traffic to “Local” services is routed directly to the nodes hosting that service. The most significant outcome of this behavior is that inbound traffic to “Local” services still has its original source IP when it reaches the pod. In contrast, traffic forwarded to a “Cluster” service will have its source IP masqueraded by the kube-proxy.
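The advertisement rules described above can be summarized in a short Python model. This is a sketch of the behavior as described in this post, not Calico's actual implementation. The `Service` class, its field names, and the `advertised_routes` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Service:
    """Hypothetical, simplified view of a Kubernetes service."""
    name: str
    cluster_ip: str
    external_traffic_policy: str = "Cluster"
    # Names of the nodes that run at least one pod backing this service.
    endpoint_nodes: set = field(default_factory=set)


def advertised_routes(node, service_cidr, services):
    """Return the service routes a given node would advertise over BGP."""
    # Every node advertises the whole service CIDR.
    routes = [service_cidr]
    for svc in services:
        # "Local" services additionally get a /32, but only from nodes
        # that host a backing pod.
        if svc.external_traffic_policy == "Local" and node in svc.endpoint_nodes:
            routes.append(f"{svc.cluster_ip}/32")
    return routes


if __name__ == "__main__":
    nginx = Service("nginx", "10.96.0.1", "Local", {"kube-node-1"})
    for node in ("kube-node-1", "kube-node-2"):
        print(node, advertised_routes(node, "10.96.0.0/12", [nginx]))
```

Since the /32 is more specific than the cluster-wide CIDR route, routers prefer it, which is why traffic to a “Local” service goes straight to a node hosting one of its pods.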

Example in action

The following example assumes you have a Kubernetes cluster with Calico installed, and BGP peerings configured between Calico and your infrastructure. It walks through how to enable and use the new service cluster IP advertisement feature.

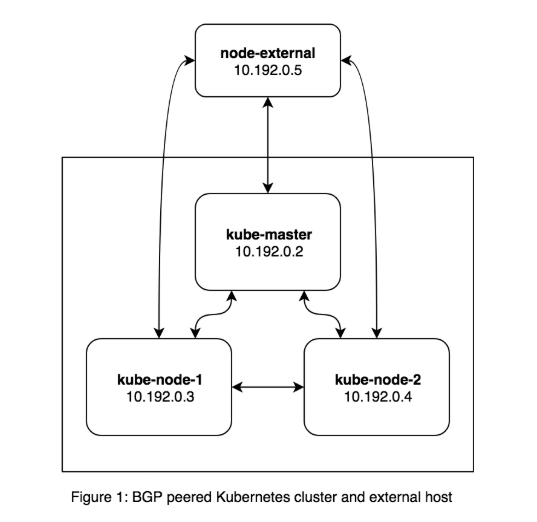

For the purposes of this example, I used a 3 node Kubernetes cluster with a fourth host that is not part of the Kubernetes cluster. I ran BIRD on the fourth host and peered it with the Calico nodes running in the cluster so it could learn routes. It looks something like this.

First, let’s enable the feature on the cluster. We can do this by updating the calico-node daemonset with the service cluster IP range for the Kubernetes cluster. If you’re not sure what this is, you can find the correct value by checking the --service-cluster-ip-range argument passed to the Kubernetes apiserver. The service CIDR for my cluster is 10.96.0.0/12, so I ran the following command on the master.

kube-master~$ kubectl -n kube-system patch daemonset calico-node -p '{"spec":{"template":{"spec":{"containers":[{"name": "calico-node", "env":[{"name":"CALICO_ADVERTISE_CLUSTER_IPS","value":"10.96.0.0/12"}]}]}}}}'

Once complete, you should see service routes advertised from Calico. Here’s what it looks like on node-external in my cluster. The 10.96.0.0/12 route, with one next hop per cluster node, is the service range being advertised by Calico.

node-external~$ ip r
default via 10.192.0.1 dev eth0
10.96.0.0/12 proto bird
    nexthop via 10.192.0.2 dev eth0 weight 1
    nexthop via 10.192.0.3 dev eth0 weight 1
    nexthop via 10.192.0.4 dev eth0 weight 1
10.192.0.0/24 dev eth0 proto kernel scope link src 10.192.0.5
192.168.135.128/26 via 10.192.0.3 dev eth0 proto bird
192.168.169.128/26 via 10.192.0.4 dev eth0 proto bird
192.168.221.192/26 via 10.192.0.2 dev eth0 proto bird

The entries that begin with nexthop tell us that this is an ECMP route, since they all have equal weights. Now let’s start up an nginx deployment, create a service for it, and try to access it from the external node.

kube-master~$ kubectl create deployment nginx --image=nginx
deployment.apps/nginx created
kube-master~$ kubectl create service nodeport nginx --tcp 80:80
service/nginx created

Assume that the pod runs on kube-node-1 and that the service has been assigned an IP address of 10.96.0.1. Since 10.96.0.1 falls within the advertised range 10.96.0.0/12, the service is now reachable from any externally peered host (i.e. node-external). Let’s try to access the service from node-external.

node-external~$ nc -zv 10.96.0.1 80
Connection to 10.96.0.1 port 80 [tcp/http] succeeded!
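The connection succeeds because the service IP is covered by the advertised CIDR. If you want to check which addresses a given service CIDR covers, a quick way is Python's standard `ipaddress` module. The sketch below uses the values from this example. The helper name `is_covered` is just for illustration.

```python
import ipaddress


def is_covered(ip, cidr):
    """Return True if the address falls inside the advertised CIDR."""
    return ipaddress.ip_address(ip) in ipaddress.ip_network(cidr)


if __name__ == "__main__":
    cidr = ipaddress.ip_network("10.96.0.0/12")
    # First and last address of the range, and how many addresses it holds.
    print(cidr[0], cidr[-1], cidr.num_addresses)
    print(is_covered("10.96.0.1", "10.96.0.0/12"))
    print(is_covered("10.192.0.5", "10.96.0.0/12"))
```

A /12 starting at 10.96.0.0 spans 10.96.0.0 through 10.111.255.255, so the node network 10.192.0.0/24 used in this example falls outside it.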

It is worth noting that the nginx service is accessed on port 80 at its cluster IP, instead of on a high-numbered node port (30000-32767 by default) like a normal NodePort service. Now let’s set the nginx service’s externalTrafficPolicy to Local and see what happens.

kube-master~$ kubectl patch service nginx -p '{"spec":{"externalTrafficPolicy":"Local"}}'

service/nginx patched

Once this service is updated, we can look at the routes on node-external and see that it now has an explicit route to the nginx service (10.96.0.1).

node-external~$ ip r
default via 10.192.0.1 dev eth0
10.96.0.0/12 proto bird
    nexthop via 10.192.0.2 dev eth0 weight 1
    nexthop via 10.192.0.3 dev eth0 weight 1
    nexthop via 10.192.0.4 dev eth0 weight 1
10.96.0.1 via 10.192.0.3 dev eth0 proto bird
10.192.0.0/24 dev eth0 proto kernel scope link src 10.192.0.5
192.168.135.128/26 via 10.192.0.3 dev eth0 proto bird
192.168.169.128/26 via 10.192.0.4 dev eth0 proto bird
192.168.221.192/26 via 10.192.0.2 dev eth0 proto bird

Since there is only one pod running that backs the nginx service, there is only one next hop for node-external to reach the service. Now let’s scale this deployment to 2 pods so that two nodes are running an nginx pod, and then print the routes again.

kube-master~$ kubectl scale --replicas=2 deployment/nginx
deployment.extensions/nginx scaled
node-external~$ ip r
default via 10.192.0.1 dev eth0
10.96.0.0/12 proto bird
    nexthop via 10.192.0.2 dev eth0 weight 1
    nexthop via 10.192.0.3 dev eth0 weight 1
    nexthop via 10.192.0.4 dev eth0 weight 1
10.96.0.1 proto bird
    nexthop via 10.192.0.3 dev eth0 weight 1
    nexthop via 10.192.0.4 dev eth0 weight 1
10.192.0.0/24 dev eth0 proto kernel scope link src 10.192.0.5
192.168.135.128/26 via 10.192.0.3 dev eth0 proto bird
192.168.169.128/26 via 10.192.0.4 dev eth0 proto bird
192.168.221.192/26 via 10.192.0.2 dev eth0 proto bird

Note that the route to the nginx service is now an ECMP route with two next hops, since the service is running on two nodes.
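If you would rather check the next hops programmatically than read the routing table by eye, something like the following Python sketch could help. It parses text in the same shape as the `ip r` output above and collects the next hops of each BIRD-installed route. The function names are hypothetical, the sample is a trimmed copy of the output from this example, and the parser only handles the route formats shown in this post.

```python
SAMPLE = """\
default via 10.192.0.1 dev eth0
10.96.0.0/12 proto bird
    nexthop via 10.192.0.2 dev eth0 weight 1
    nexthop via 10.192.0.3 dev eth0 weight 1
    nexthop via 10.192.0.4 dev eth0 weight 1
10.96.0.1 proto bird
    nexthop via 10.192.0.3 dev eth0 weight 1
    nexthop via 10.192.0.4 dev eth0 weight 1
10.192.0.0/24 dev eth0 proto kernel scope link src 10.192.0.5
192.168.135.128/26 via 10.192.0.3 dev eth0 proto bird
"""


def value_after(fields, key):
    """Return the token following `key`, or None if it is absent."""
    if key in fields:
        i = fields.index(key)
        if i + 1 < len(fields):
            return fields[i + 1]
    return None


def parse_next_hops(ip_route_output):
    """Return {prefix: [next hop, ...]} for routes with `proto bird`."""
    routes = {}
    current = None  # prefix of the multipath route being read, if any
    for line in ip_route_output.splitlines():
        fields = line.split()
        if not fields:
            continue
        if fields[0] == "nexthop":
            # Continuation line of the preceding multipath route.
            hop = value_after(fields, "via")
            if current is not None and hop is not None:
                routes[current].append(hop)
            continue
        current = None
        if value_after(fields, "proto") == "bird":
            current = fields[0]
            routes[current] = []
            hop = value_after(fields, "via")
            if hop is not None:
                routes[current].append(hop)
    return routes


if __name__ == "__main__":
    for prefix, hops in parse_next_hops(SAMPLE).items():
        print(prefix, hops)
```

A route with more than one next hop in the result is an ECMP route. For a “Local” service, the number of next hops should match the number of nodes running a backing pod.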

Summary

Calico v3.4 introduces the ability to advertise Kubernetes service cluster IP routes over BGP, making Kubernetes services accessible outside of the Kubernetes cluster without the need for a dedicated load balancer. This feature can be enabled by specifying the service IP block to advertise as an environment variable. Calico respects the service’s external traffic policy. For more details on how to administer this feature, please refer to the documentation.

We’re excited to see how this feature will get used by the community, and hope to gather feedback to help shape future development. If you’d like to get involved or provide feedback, please reach out over Slack or open a GitHub issue!
